An AI Blitz: Highlights from Brain Co.'s Hackathon 2026

Featured projects from our company-wide AI hackathon.

Member of Technical Staff @ Brain Co

The year came out swinging. Frontier labs are stepping on the gas in a tightening race for dominance, while ClawdBot was unleashed on the internet. At Brain Co. we don’t sit on the sidelines - we held our first-ever Hackathon. We carved out two days for the whole company. No product spec, no roadmap, no approvals required. The themes: reusable demos, building blocks, AI leverage and automations that help us compound over time. We received 15 project submissions from teams of 2-5 people.

Teams presented their creations in front of the rest of the company, and what was supposed to be 5-6 demos went well over time in a showdown packed with laughs, probing questions and novel ideas. We are a company of builders, after all, and it is commonplace for non-technical folks to spin up their own internal tools with AI and share them with others. We should have foreseen this.

Projects ranged from “You’re doing it wrong!”, an app that reviews how you’re prompting LLMs and helps you learn by suggesting improvements - to “Best PDFViewer”, a PDF viewer that leverages AI to link references between pages, has built-in web and agentic search, and allows users to share bookmarks, notes and highlights with each other.

Some projects were obvious extensions of our client work and moved forward organically. Others required conviction — they didn’t have an immediate customer tie-in, but we believed in the underlying thesis. Examples of this are Tenor, an AI oracle that runs locally and securely on your machine, and AIrene, a new paradigm in candidate sourcing named as an homage to our incredible Head of Recruiting, Irene Joo. These weren’t side experiments. After a focused push, they went into production and are now used across the company.

Let’s dive deeper.

In a world of competing priorities, we have to pick our battles - we can’t do everything at once. We are growing fast, and deliberately choose to stay focused on hiring the right talent to deliver value to our customers. We enable our people and pods to move fast and autonomously, empowering them to make their own decisions and reducing blockers wherever possible.

The result is a structure that is optimized for speed and value creation at the cost of fragmentation and communication overhead. As we enter scaling mode, the gap between the company’s general updates and one’s daily work and interactions will only grow. This is exacerbated by the fact that our company is distributed all around the world. We are not willing to compromise on speed, autonomy or our distributed setup, so we set out to find a way to communicate knowledge across the company more efficiently.

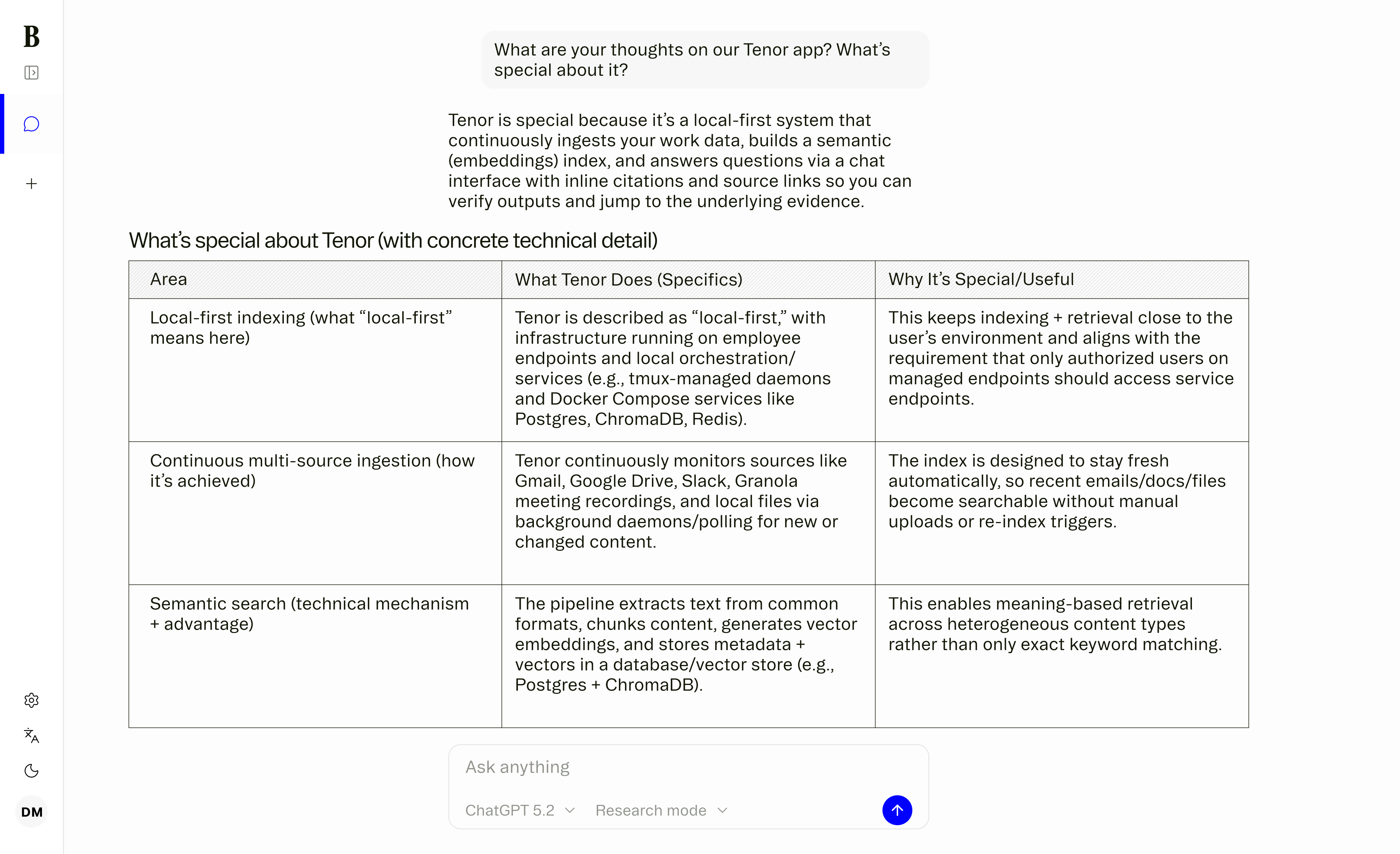

Rather than attempting to impose structure on the way our team reports their work - a rather bureaucratic and naïve objective for a company of our size and growth trajectory - we set out to build a tool to organize it for us. Tenor is your personal, local-only knowledge hub. It fetches data you have access to from a diverse range of sources and indexes it. You can chat with Tenor, and it will combine this data with calls to LLM services to provide you with the answer you are looking for. Save for the LLM calls, which are configured for zero data retention, everything stays on your computer; there is no need to stress about your personal data being leaked while you sleep.

Tenor can see whatever you (and only you) have access to. This means all public company information and your own private data. Copious amounts of Drive files? No problem. No time to catch up on your Slack channels? No problem. Tenor will take care of making sense of it all so that the next time you want knowledge or news about a specific topic, you don’t have to dig for it or ask someone to provide you with an update. All of this without exposing your sensitive data to some cloud service you have no control over.
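To make the fetch, index and chat flow a little more concrete, here is a minimal sketch of a local retrieval step. It is an illustration under loud assumptions, not Tenor's actual code: the names `Doc`, `LocalIndex` and `build_prompt` are hypothetical, and a simple bag-of-words cosine similarity stands in for whatever embedding and ranking Tenor really uses. The only part that would leave the machine is the final prompt sent to the LLM service.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Doc:
    source: str  # hypothetical label, e.g. "drive" or "slack"
    text: str


def tokenize(text: str) -> Counter:
    """Lowercase bag of words; a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class LocalIndex:
    """In-memory index; nothing here is written to or read from a remote service."""

    def __init__(self) -> None:
        self._entries: list[tuple[Doc, Counter]] = []

    def add(self, doc: Doc) -> None:
        self._entries.append((doc, tokenize(doc.text)))

    def search(self, query: str, k: int = 3) -> list[Doc]:
        """Return up to k documents with non-zero similarity, best first."""
        q = tokenize(query)
        scored = [(cosine(q, vec), doc) for doc, vec in self._entries]
        scored = [pair for pair in scored if pair[0] > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [doc for _, doc in scored[:k]]


def build_prompt(question: str, hits: list[Doc]) -> str:
    """Assemble the text that would be sent to the LLM service."""
    context = "\n".join(f"[{d.source}] {d.text}" for d in hits)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"
```

In this sketch the index only ever holds documents the local user could already read, which is how the "whatever you (and only you) have access to" property would fall out of the design rather than needing a separate permission layer.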

Our first aha moment came during the Hackathon, when we were trying out the results of our first iteration. At that point we had only set it up to retrieve data from Google Drive and our e-mail. One of our engineers was asked to provide our GTM team with bullet points on the next steps and blockers for a project. Tenor’s answer to the question “what are the next steps for this project and are there any blockers?” was spot-on - it cut a 10-minute task down to a few seconds.

Since then, Tenor has only improved. It now integrates with six different tools, and more integrations are in the works. With RAG optimizations and tweaks to our AI pipeline, we have made solid improvements to the quality of the responses. It’s faster and more robust, and has different modes (Fast, Thinking and Research) that direct the model to perform different amounts of work.
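One way to picture the three modes is as a lookup from mode to a work budget. The sketch below is purely illustrative: the post does not say how the modes differ internally, so the `Budget` fields and every number in `BUDGETS` are assumptions chosen only to show the shape of such a configuration.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    FAST = "fast"
    THINKING = "thinking"
    RESEARCH = "research"


@dataclass(frozen=True)
class Budget:
    top_k: int      # assumed knob: retrieved chunks handed to the model
    max_steps: int  # assumed knob: retrieve-then-reason rounds allowed


# Illustrative values only; not taken from Tenor.
BUDGETS = {
    Mode.FAST: Budget(top_k=3, max_steps=1),
    Mode.THINKING: Budget(top_k=8, max_steps=3),
    Mode.RESEARCH: Budget(top_k=20, max_steps=10),
}


def budget_for(mode: Mode) -> Budget:
    """Look up how much work a given mode is allowed to do."""
    return BUDGETS[mode]
```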

We are rolling this out to the rest of the company, but this is only the beginning. There are many more tools to integrate, file types to make sense of (e.g. photos and videos) and a few more optimizations to make. We haven’t even touched on voice recognition so that you can talk to Tenor, nor on allowing it to perform write operations on your data. In the future, we see Tenor as the hub where you operate rather than a tool to augment your work. You don’t just use Tenor to make sense of your data. You use Tenor to make informed decisions that the app executes for you.

One of the most challenging aspects of growing a startup is finding strong candidates to consider. Building a strong top-of-funnel is crucial to our recruiting efforts. However, we have generally been unsatisfied with the tools available, especially given their cost. Some identify promising candidates initially but lose effectiveness over time, while others fail to surface profiles aligned with our needs. Our recruiters often voiced frustration that existing tools were not flexible enough to reflect how we actually evaluate talent.

During the hackathon, we decided to take a stab at developing an experimental candidate discovery tool: AIrene, named after our own Irene Joo. Inspired by the capabilities of coding agents, we believed LLM systems could also be leveraged to assist with candidate discovery. In this section we’ll outline how we approached this problem.

Our first realization was that job descriptions alone are not sufficient grounding for a sourcing system. While they describe required skills and responsibilities, they are often too broad to capture the nuanced patterns we apply when evaluating candidates. To address this, we introduced a new internal artifact we call steering instructions.

Steering instructions are written by our recruiting team and encode patterns our recruiters often consider when reviewing candidates. These may include lines of logic such as: giving additional weight to candidates who have shipped customer-facing AI products with measurable outcomes, recognizing founder or early-employee experience as a strong execution signal, and distinguishing between applied ML product work and infrastructure- or research-oriented roles. Encoding these more semantic guidelines allows the system to better reflect how we actually assess fit.
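As a rough sketch of how steering instructions might sit alongside a job description, the snippet below models them as a small data structure that renders into prompt text. The class names, fields and prompt layout are hypothetical; only the idea of pairing a job description with recruiter-written guidance comes from the description above.

```python
from dataclasses import dataclass, field


@dataclass
class SteeringInstruction:
    signal: str    # what the recruiter looks for
    guidance: str  # how it should influence the assessment


@dataclass
class RoleBrief:
    title: str
    job_description: str
    steering: list[SteeringInstruction] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the brief as text for a task-specific LLM call."""
        lines = [
            f"Role: {self.title}",
            "",
            "Job description:",
            self.job_description,
            "",
            "Steering instructions:",
        ]
        lines += [
            f"{i}. {s.signal}: {s.guidance}"
            for i, s in enumerate(self.steering, start=1)
        ]
        return "\n".join(lines)


# Example brief; the role title and description are placeholders.
brief = RoleBrief(
    title="Applied AI Engineer",
    job_description="Build and ship AI products for customers.",
    steering=[
        SteeringInstruction(
            "Shipped customer-facing AI products with measurable outcomes",
            "give additional weight",
        ),
        SteeringInstruction(
            "Founder or early-employee experience",
            "treat as a strong execution signal",
        ),
        SteeringInstruction(
            "Applied ML product work vs. infrastructure or research roles",
            "distinguish between them when judging fit",
        ),
    ],
)
```

Keeping the instructions as structured records rather than one free-form blob would let recruiters add, remove or reword a single signal without touching the rest of the prompt.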

Our system is composed of a series of task-specific LLM calls that follow an iterative sourcing process. We quickly realized that static querying would not be sufficient, so the system needed to refine its strategy as it learned from results.

To enable this, each LLM call has visibility into prior progress. We implemented a “research journal” mechanism: at the end of each iteration, the system summarizes which search strategies produced high-quality candidates and which did not. These reflections inform subsequent rounds, allowing the system to explore different search strategies and surface additional candidate profiles.
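The loop described above can be sketched roughly as follows. This is an assumption-laden outline rather than AIrene's implementation: `propose`, `search` and `is_good` are hypothetical callables standing in for the task-specific LLM calls and the candidate search, and the journal format is invented for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class JournalEntry:
    strategy: str
    found: int  # candidates surfaced by this strategy
    good: int   # of those, how many were judged high quality

    @property
    def hit_rate(self) -> float:
        return self.good / self.found if self.found else 0.0


@dataclass
class ResearchJournal:
    entries: list[JournalEntry] = field(default_factory=list)

    def summary(self) -> str:
        """Reflection text made visible to the next round's LLM call."""
        return "\n".join(
            f"- {e.strategy}: {e.good}/{e.found} candidates worth screening"
            for e in self.entries
        )


def run_sourcing(
    propose: Callable[[str], str],       # journal summary -> next search strategy
    search: Callable[[str], list[str]],  # strategy -> candidate profiles
    is_good: Callable[[str], bool],      # profile -> quality judgment
    rounds: int,
) -> ResearchJournal:
    journal = ResearchJournal()
    for _ in range(rounds):
        # Each round sees what earlier rounds tried and how well it worked.
        strategy = propose(journal.summary())
        candidates = search(strategy)
        good = sum(1 for c in candidates if is_good(c))
        journal.entries.append(JournalEntry(strategy, len(candidates), good))
    return journal
```

Passing the summary into `propose` is what separates this from static querying: a strategy that produced few good candidates shows up in the journal, so the next proposal can steer away from it.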

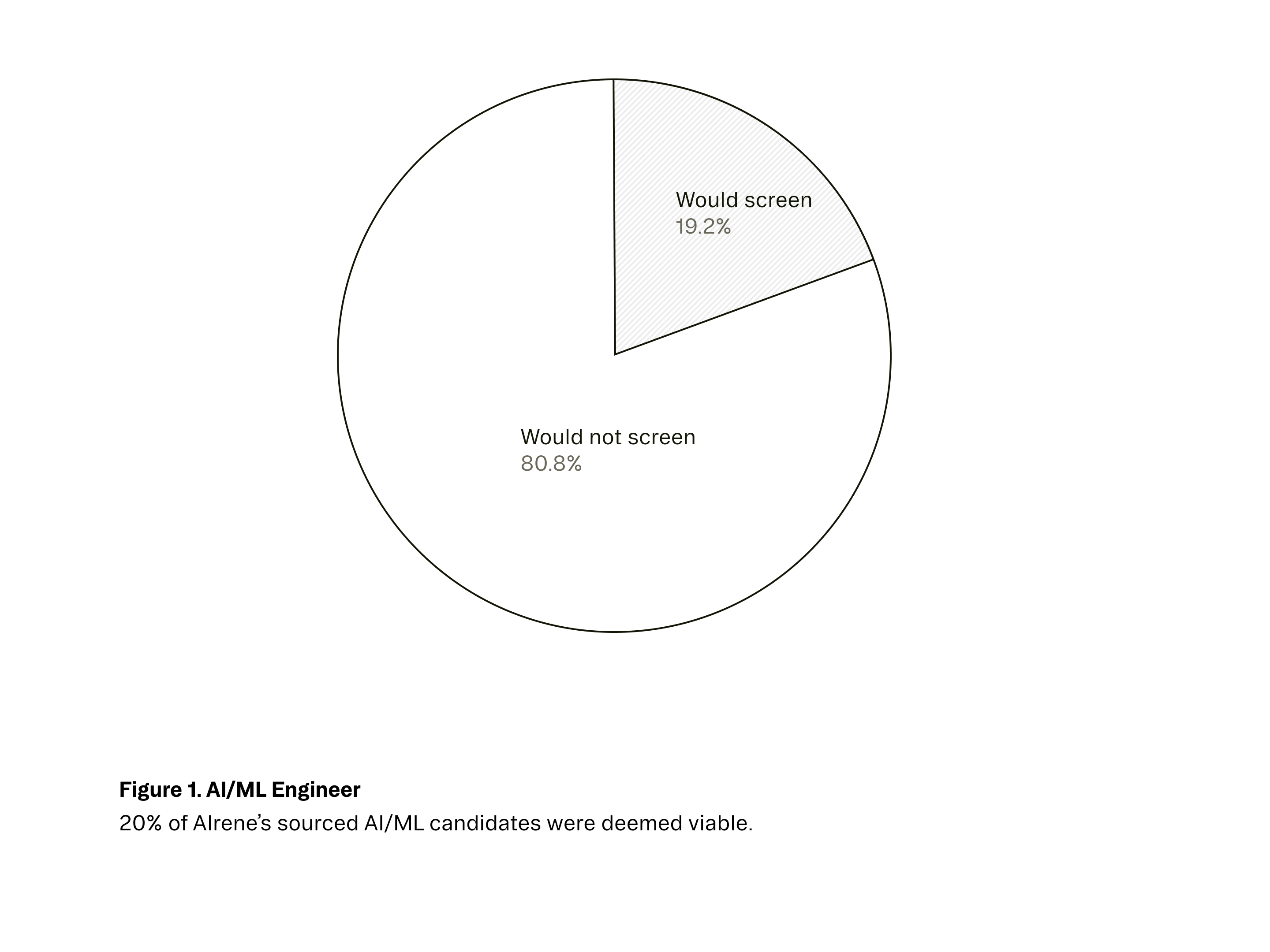

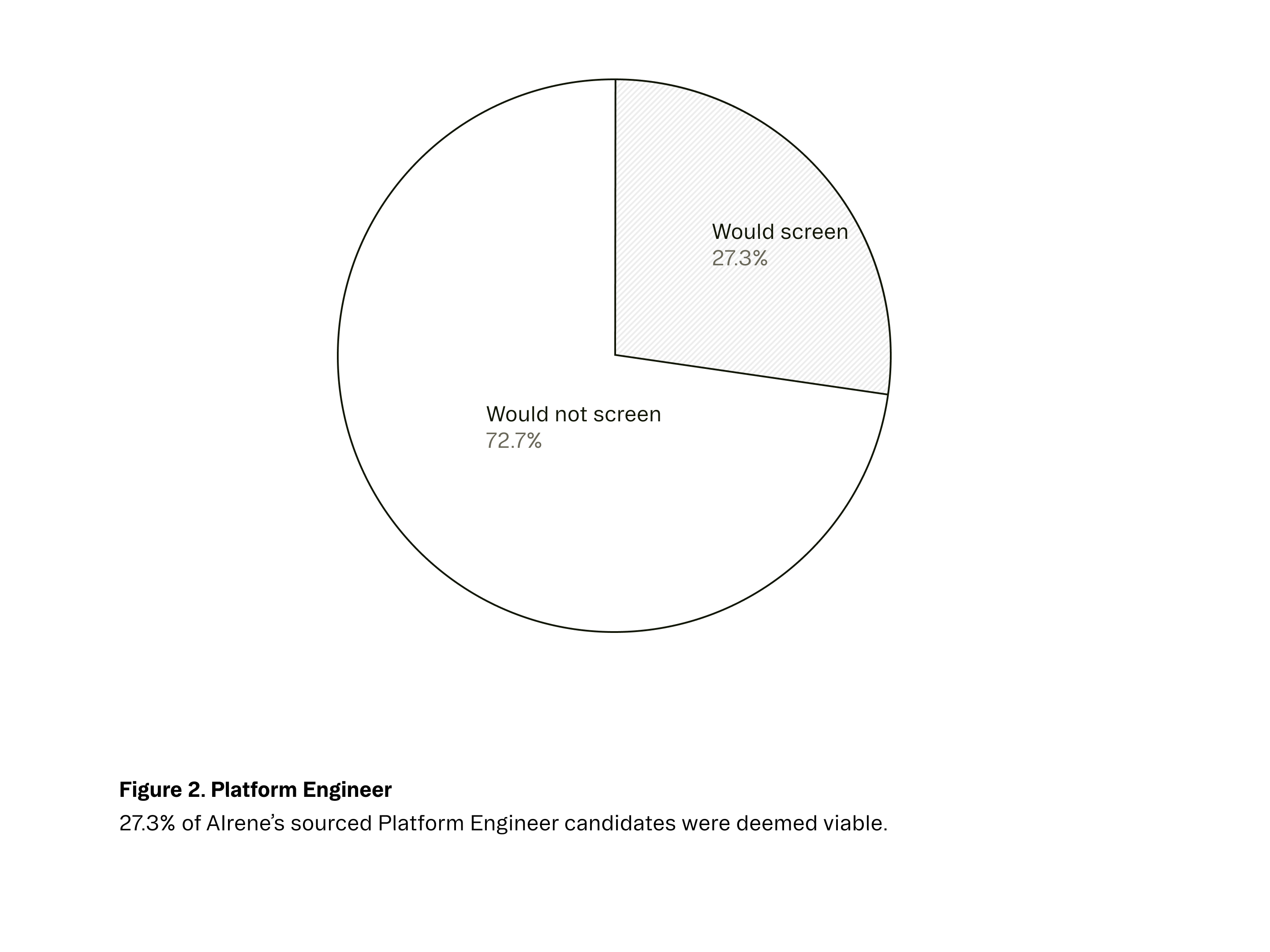

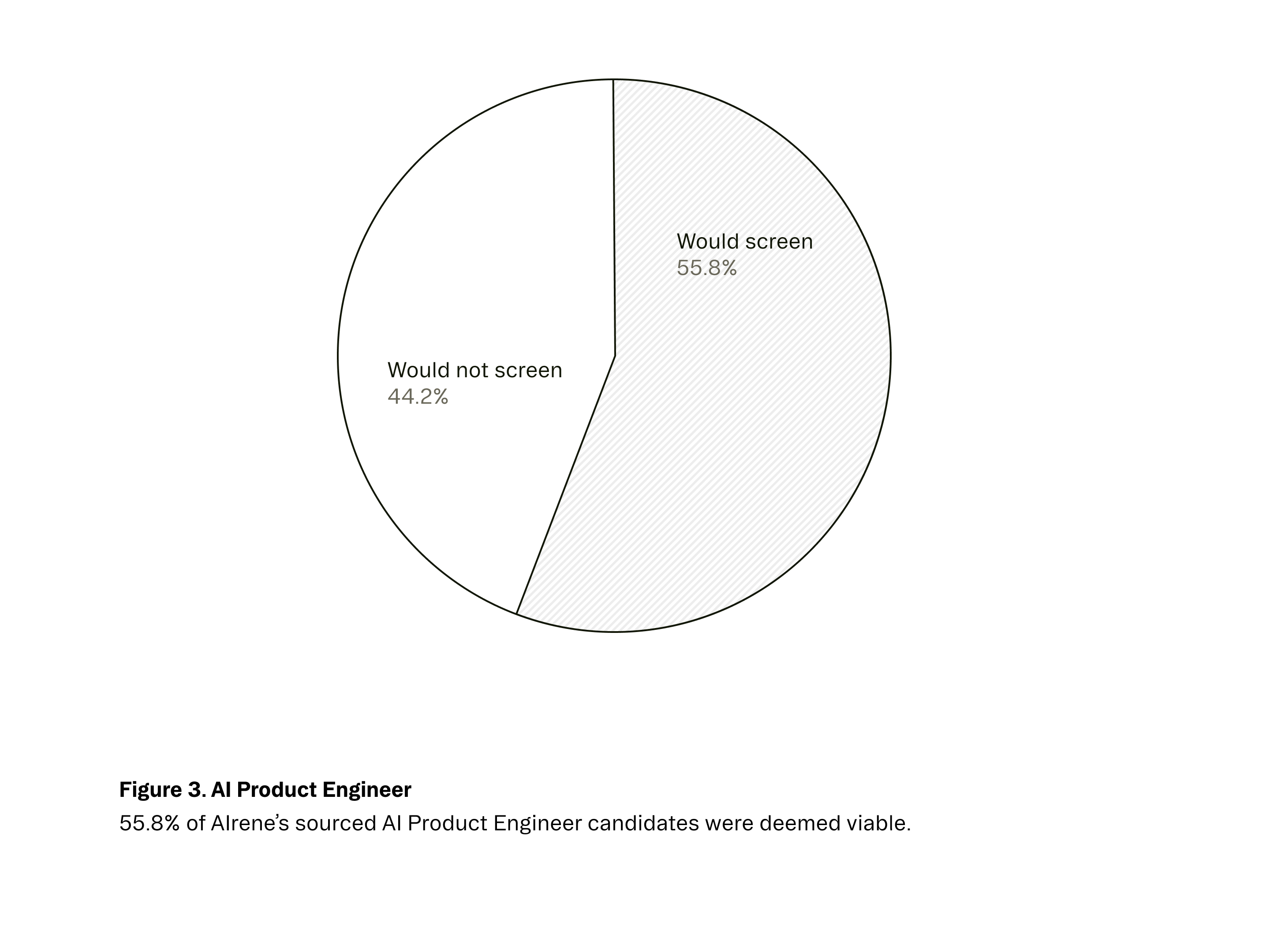

We are encouraged by the early results and continue evaluating AIrene as part of an internal experiment exploring how AI might support recruiting workflows. The accompanying charts show screening rate results for our engineering roles. In each chart, “Would screen” indicates that the system surfaced a candidate our in-house recruiters said they would reach out to.
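For readers who want the metric spelled out, a screening rate can be computed as the share of surfaced candidates labeled “Would screen”. The sketch below assumes that definition and a hypothetical second label, “Would not screen”; the input in the usage note is invented and is not our data.

```python
from collections import Counter


def screening_rate(labels: list[str]) -> float:
    """Fraction of surfaced candidates that recruiters marked 'Would screen'."""
    if not labels:
        return 0.0
    return Counter(labels)["Would screen"] / len(labels)
```

For example, four surfaced candidates of which three are labeled “Would screen” would give a rate of 0.75.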

Both Tenor and AIrene began with operational friction.

As we grow, information becomes harder to navigate and harder to entrust to external systems. Tenor addresses this by organizing the data people already work with, in the tools they already use, while keeping it local and secure. It supports how our team operates instead of asking them to change their behavior.

Hiring presents a different challenge. Evaluating talent involves nuanced judgment that traditional sourcing tools struggle to reflect. AIrene was an effort to make that judgment more explicit and to use it in a structured way to support our recruiting process.

Tenor and AIrene came from practical needs inside the company. The hackathon provided the space to build solutions around them.